Xiaomi officially launches MiMo-V2-Pro, confirming Hunter Alpha rumors

Xiaomi finally unveiled its massive trillion-parameter AI model, confirming community suspicions that the anonymous “Hunter Alpha” on OpenRouter was actually a stealth test.

Xiaomi dropped a trio of new AI models today, including a flagship text engine, an omni-modal version, and a dedicated text-to-speech system. The launch ends weeks of speculation after developers correctly predicted that a mysterious, highly capable model lurking on OpenRouter was actually a secret Xiaomi project.

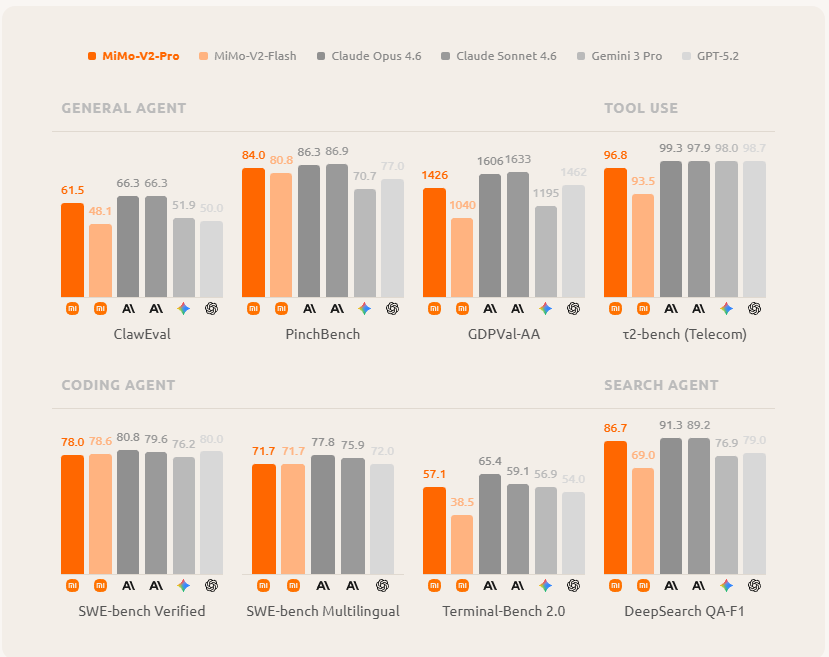

- The flagship model: MiMo-V2-Pro is a closed-weight, trillion-parameter LLM heavily optimized for real-world tool use and complex agentic workflows.

- Multimodal expansion: The company also released MiMo-V2-Omni for image, video, and audio understanding alongside a natural-sounding text-to-speech model.

- The benchmark reality: The Pro model scores 49 on the Artificial Analysis Intelligence Index, placing it just below competitors like GLM-5 but scoring exceptionally well on agent-specific tests.

- The open-source bait and switch: While Xiaomi previously earned community goodwill by open-sourcing its earlier MiMo-V2-Flash model, these new flagship models are strictly proprietary at launch. The company says it will release open weights only once the models are deemed stable enough.

- Global availability: Developers can access the models right now through a web playground and API at the official Xiaomi portal.

- Fast integration: Third-party tools such as TokenRing Coder integrated the model immediately, capitalizing on its strong coding and planning benchmark scores.

The Bottom Line: Xiaomi successfully used a ninja alias to build hype and validate its trillion-parameter model before the official launch. While the benchmark scores are highly competitive, locking the weights behind an API means developers will have to wait to see if the company honors its open-source promises.

You can try MiMo-V2-Pro right now.

