NVIDIA’s Kimodo generates studio-quality 3D motion from text, and it’s free

NVIDIA released a free text-to-motion tool trained on 700 hours of professional mocap data. Just type a prompt and get a studio-quality 3D animation in seconds.

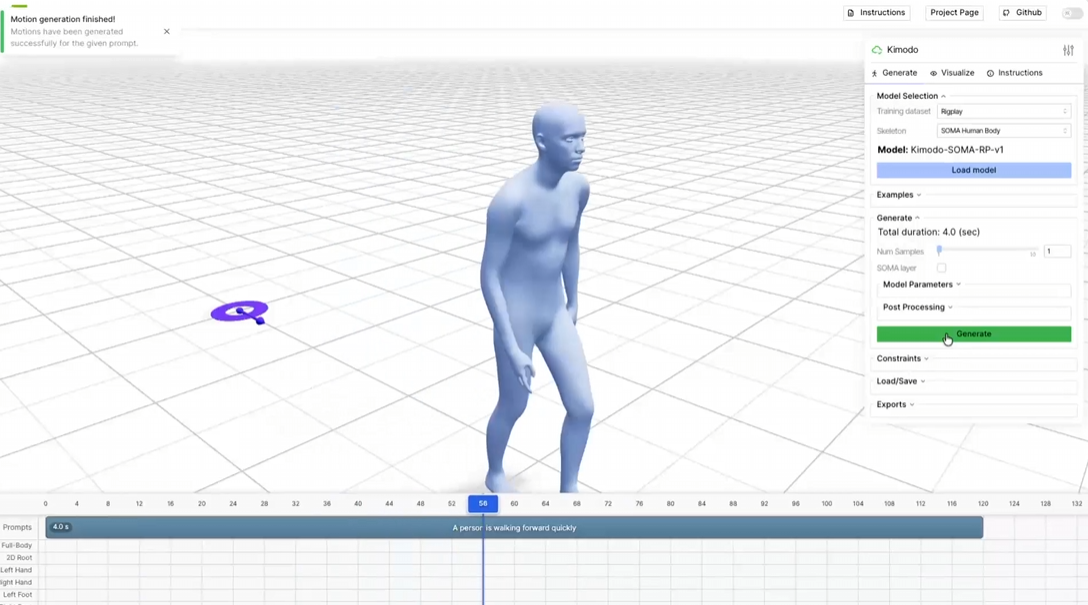

NVIDIA Research released Kimodo last week, a text-to-motion model that generates 3D skeletal animations for both human characters and humanoid robots from simple text prompts. A hosted demo is available now on Hugging Face with no installation required, and the full code is open-source under Apache 2.0.

The quality gap between Kimodo and earlier text-to-motion tools comes down to data. Most public motion capture datasets run to around 70 hours of recorded movement. Kimodo was trained on Bones Rigplay, a proprietary studio dataset containing 700 hours of professional optical mocap data, roughly ten times the typical scale.

- The result: Clean, physics-aware animations generated in two to five seconds on an RTX 3090, with significantly fewer floating and foot-skating artifacts than previous models.

The model goes beyond basic text prompts. Prompts can be layered sequentially or simultaneously, for example “walks forward” into “waves arms while jogging”, and combined with style modifiers such as tired, drunk, or combat. Users can also pin specific hand and foot positions, full-body keyframe poses, and 2D movement paths directly into the generation process.

- The robot side: A separate model variant outputs animations directly onto the Unitree G1 humanoid skeleton, in MuJoCo CSV and Isaac Sim formats. That makes it practical for generating large volumes of motion training data for humanoid robots without recording a single physical demonstration.
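For readers who want to sanity-check an exported robot motion file before feeding it to a simulator, here is a minimal sketch using only Python's standard library. It assumes the CSV holds one row of numeric values per frame, optionally preceded by a header row. The article does not document the actual column layout of Kimodo's MuJoCo CSV export, so the function name `summarize_motion_csv` and that layout are illustrative assumptions, not the tool's API.

```python
import csv
import io


def summarize_motion_csv(text):
    """Summarize a per-frame motion CSV given as a string.

    Assumption (not confirmed by the article): one row per frame,
    all values numeric, with an optional header row on top.
    """
    rows = [row for row in csv.reader(io.StringIO(text)) if row]
    if not rows:
        return {"frames": 0, "columns": [], "header": None}

    header = None
    try:
        # If the first row parses as numbers, treat it as data.
        [float(value) for value in rows[0]]
    except ValueError:
        # Otherwise treat it as a header and skip it.
        header, rows = rows[0], rows[1:]

    frames = [[float(value) for value in row] for row in rows]
    # More than one distinct width suggests a malformed export.
    widths = sorted({len(frame) for frame in frames})
    return {"frames": len(frames), "columns": widths, "header": header}
```

To use it on a real file, read the file's contents and pass the text in, for example `summarize_motion_csv(open("motion.csv").read())`, where `motion.csv` is a placeholder filename. A result with more than one entry under `"columns"` means rows of differing length, which is worth investigating before loading the file elsewhere.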

For anyone looking to run it locally, here is what to know:

- VRAM requirement: ~17 GB. The hosted Hugging Face demo has no local hardware requirement.

- Export formats: NPZ, MuJoCo CSV, Isaac Sim, and ProtoMotions.

- License: Apache 2.0 for the code. Model checkpoints are licensed separately on Hugging Face.
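Before attempting a local run, it may help to check whether a GPU clears the roughly 17 GB VRAM figure quoted above. The sketch below queries `nvidia-smi` for total memory per GPU and compares it against that threshold. The threshold constant and the helper names are illustrative assumptions, the script treats the figure as GiB for simplicity, and the real memory use may vary with settings the article does not cover.

```python
import subprocess

# Approximate figure from the article; treated as GiB here (an assumption).
REQUIRED_GIB = 17.0


def parse_total_mib(smi_output):
    """Parse one integer (MiB) per line, as printed by nvidia-smi with nounits."""
    return [int(line.strip()) for line in smi_output.splitlines() if line.strip()]


def gpus_with_enough_vram(totals_mib, required_gib=REQUIRED_GIB):
    """Return the indices of GPUs whose total memory meets the threshold."""
    return [i for i, mib in enumerate(totals_mib) if mib / 1024 >= required_gib]


def query_total_mib():
    """Ask nvidia-smi for the total memory of each GPU, in MiB."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_total_mib(result.stdout)


if __name__ == "__main__":
    try:
        totals = query_total_mib()
    except (FileNotFoundError, subprocess.CalledProcessError):
        print("nvidia-smi is not available; cannot check VRAM on this machine.")
    else:
        suitable = gpus_with_enough_vram(totals)
        if suitable:
            print(f"GPUs meeting the ~{REQUIRED_GIB:g} GiB threshold: {suitable}")
        else:
            print("No GPU meets the threshold; the hosted demo may be the better option.")
```

A 24 GB card such as the RTX 3090 mentioned earlier reports 24576 MiB and passes the check, while a 16 GB card reports 16384 MiB and does not.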

The Bottom Line: Text-to-motion has existed for a while, but the results were rarely good enough to use in production. Kimodo’s dataset scale changes that calculation, and the free availability means game studios, robotics researchers, and individual developers are all working with the same tool starting now.

Check it out: NVIDIA Research / GitHub / Hugging Face
