Claude Code may be burning your limits with invisible tokens

A developer’s experiment suggests Anthropic may be silently injecting thousands of tokens into Claude Code requests, and users’ usage limits are taking the hit.

Claude Code users have been complaining for weeks that their usage limits are disappearing faster than they should. Even on the Max 20x plan at $200 per month, users report hitting their quota within hours of active sessions, sometimes in as little as 90 minutes.

The complaints have been widespread enough that Anthropic acknowledged them, noting that users were hitting usage limits in Claude Code faster than expected. No clear explanation followed.

Limits are shared across all Claude interfaces, so running Claude Code alongside regular chat accelerates the burn. Heavy sessions on large repositories compound it further. But the math still didn’t add up for a lot of users, and the community started digging.

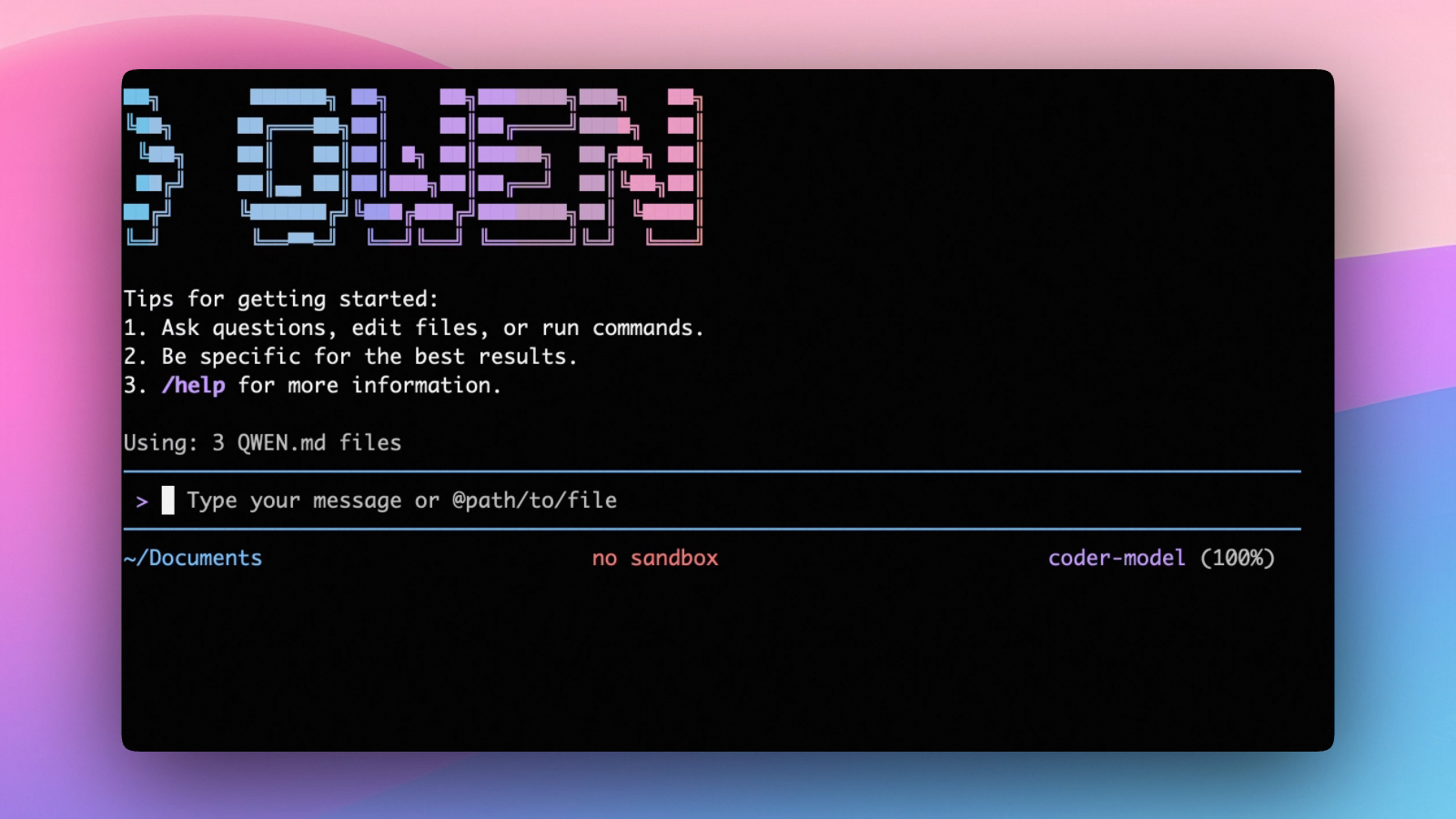

One developer set up an HTTP proxy to capture full API requests across multiple Claude Code versions and found something that would explain the gap. Starting with v2.1.100, every request appears to carry around 20,000 extra tokens that the user never sent.

The numbers came from the same project and the same prompt across versions. v2.1.98 billed 49,726 tokens. v2.1.100 billed 69,922. The v2.1.100 request was actually smaller in bytes sent from the client, which suggests the extra tokens are not coming from the user side.
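To put the two reported figures side by side, here is a minimal sketch of the arithmetic. The function name `token_delta` is hypothetical, and the only inputs are the two billed totals quoted above; it makes no claim about how Anthropic actually counts or bills tokens.

```python
def token_delta(before: int, after: int) -> tuple[int, float]:
    """Return the absolute and percentage increase between two billed token counts."""
    extra = after - before
    return extra, 100 * extra / before


# Billed totals reported for the same project and prompt (from the article).
V2_1_98_BILLED = 49_726
V2_1_100_BILLED = 69_922

extra, pct = token_delta(V2_1_98_BILLED, V2_1_100_BILLED)
print(f"{extra:,} extra tokens (+{pct:.1f}%)")  # prints "20,196 extra tokens (+40.6%)"
```

On these numbers, the gap is 20,196 tokens, or roughly 41% more billed per request for the same prompt.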

If the measurements hold, the inflation is happening server-side. It doesn’t show up in the CLI’s /context view or anywhere users can audit it. Nothing in Anthropic’s changelogs explains it.

This hasn’t been independently verified at scale, and Anthropic hasn’t commented on it. Multiple users have since reported the same delta after running their own proxy tests, and the finding has circulated widely on X and Reddit.

Community speculation points to expanded session memory features introduced in v2.1.100, such as summary injection or additional tool schemas. Whether intentional or a bug, the effect is the same.

If the extra tokens are real, they don’t just affect billing. They would also enter the model’s actual context window, diluting custom instructions set in CLAUDE.md and degrading output quality faster in long sessions. When Claude starts ignoring project rules, there’s currently no way to tell if injected context is the cause.

The workaround circulating on X and Reddit is downgrading to v2.1.98, for example via npx @anthropic-ai/claude-code@2.1.98 (the package's full name on npm).

The token inflation finding landed on top of an already frustrating month for Claude Code users. On April 4, Anthropic removed the ability to use Claude subscription limits for third-party tools.

OpenClaw, an open-source autonomous AI agent that many users ran on top of their Claude subscription, was among the tools affected. Usage through those tools now draws from a separate pay-as-you-go add-on billed outside the subscription.

Anthropic offered affected users a one-time credit equal to their monthly subscription to ease the transition.
