Z.ai releases GLM-5-Turbo to fix broken coding agents

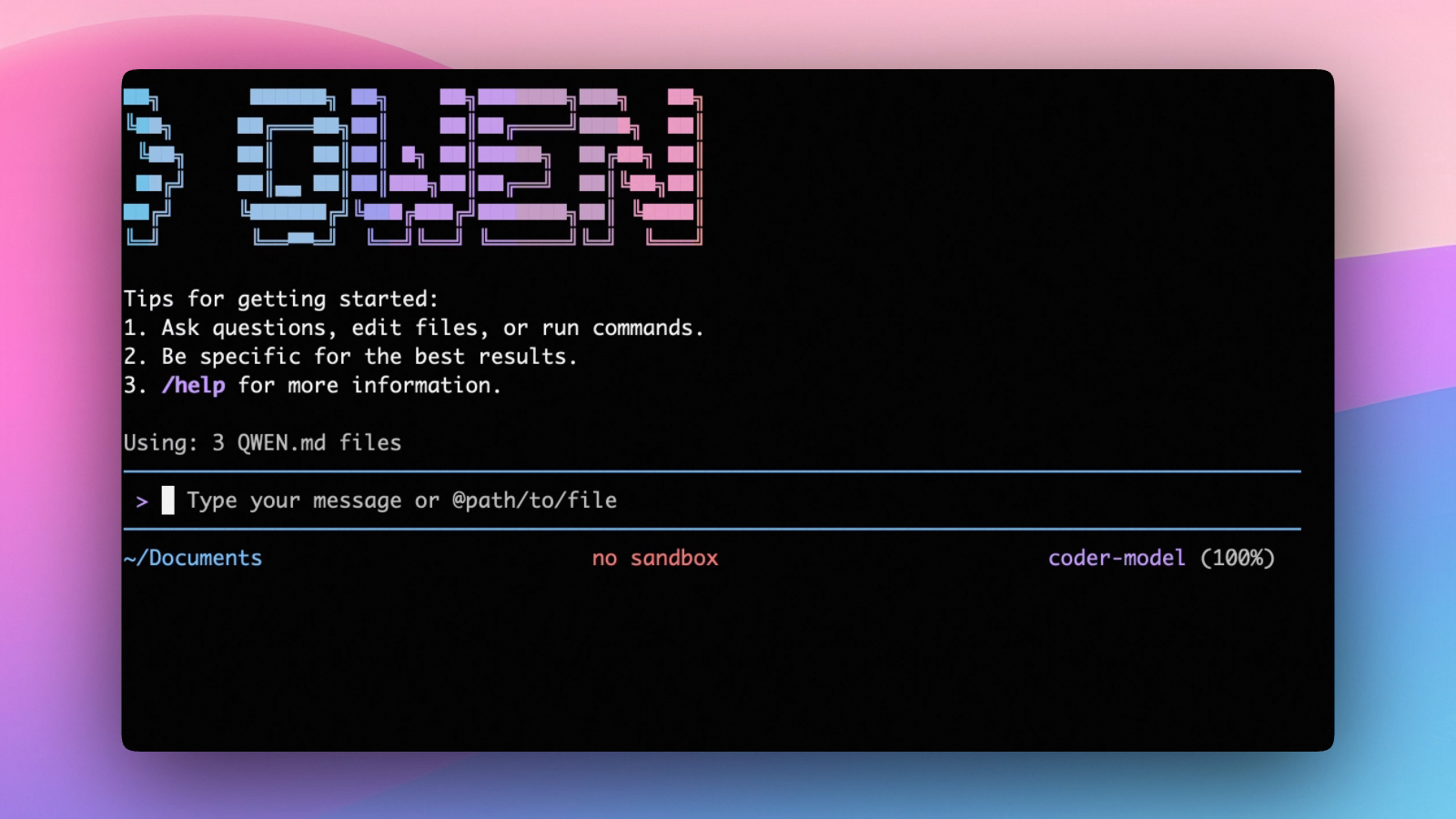

Z.ai just launched a closed-source variant of its flagship model. GLM-5-Turbo is built specifically to stop autonomous coding agents from stalling during long execution loops.

The Chinese AI developer is positioning the high-speed model for multi-step, agent-driven coding workflows.

Key Takeaways:

- The core utility: The model features a 200,000-token context window. It is engineered to maintain stability and parse complex instructions during extensive agent workflows.

- The early reception: Developers are already testing the model in environments like OpenClaw, Cursor, and Cline. Early community reactions are calling it a “200k token coding agent monster.”

- The rollout: Pro tier subscribers have immediate access. Lite users will receive access to the base GLM-5 this month. Turbo access for Lite users is delayed until April.

- The cost: The model is available directly through the Z.ai API or via OpenRouter. OpenRouter pricing sits at $0.96 per million input tokens and $3.20 per million output tokens.
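To put the OpenRouter rates in perspective, here is a rough cost sketch for an agent loop. The rates are the ones quoted above; the function names and the example workload (50 steps, 150,000 input tokens and 2,000 output tokens per step) are hypothetical, and the sketch assumes every step is billed at full price with no prompt caching or discounts.

```python
from decimal import Decimal

# OpenRouter rates quoted in this article (USD per million tokens).
INPUT_USD_PER_MILLION = Decimal("0.96")
OUTPUT_USD_PER_MILLION = Decimal("3.20")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_call_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the USD cost of a single model call at the quoted rates."""
    input_cost = Decimal(input_tokens) * INPUT_USD_PER_MILLION
    output_cost = Decimal(output_tokens) * OUTPUT_USD_PER_MILLION
    return (input_cost + output_cost) / TOKENS_PER_MILLION


def estimate_run_cost(steps: int, input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate an agent run, assuming every step has the same token counts.

    Assumption: no caching or discounts, so each step pays full price.
    """
    return estimate_call_cost(input_tokens, output_tokens) * steps


if __name__ == "__main__":
    # Hypothetical workload: 50 agent steps, each re-sending a large context.
    total = estimate_run_cost(steps=50, input_tokens=150_000, output_tokens=2_000)
    print(f"Estimated run cost: ${total:.2f}")
```

In a loop like this, input tokens dominate the bill because the agent re-sends its context on every step, so the input rate matters more than the output rate for long-running agents.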

What They Said:

“A 200k token coding agent monster.” — Early community reactions across English, Chinese, and Turkish developer forums.

The Bottom Line: GLM-5-Turbo is a direct attempt to solve the exact speed and reliability issues that currently break autonomous coding agents at scale.

