OpenAI brings parallel subagents to Codex developers

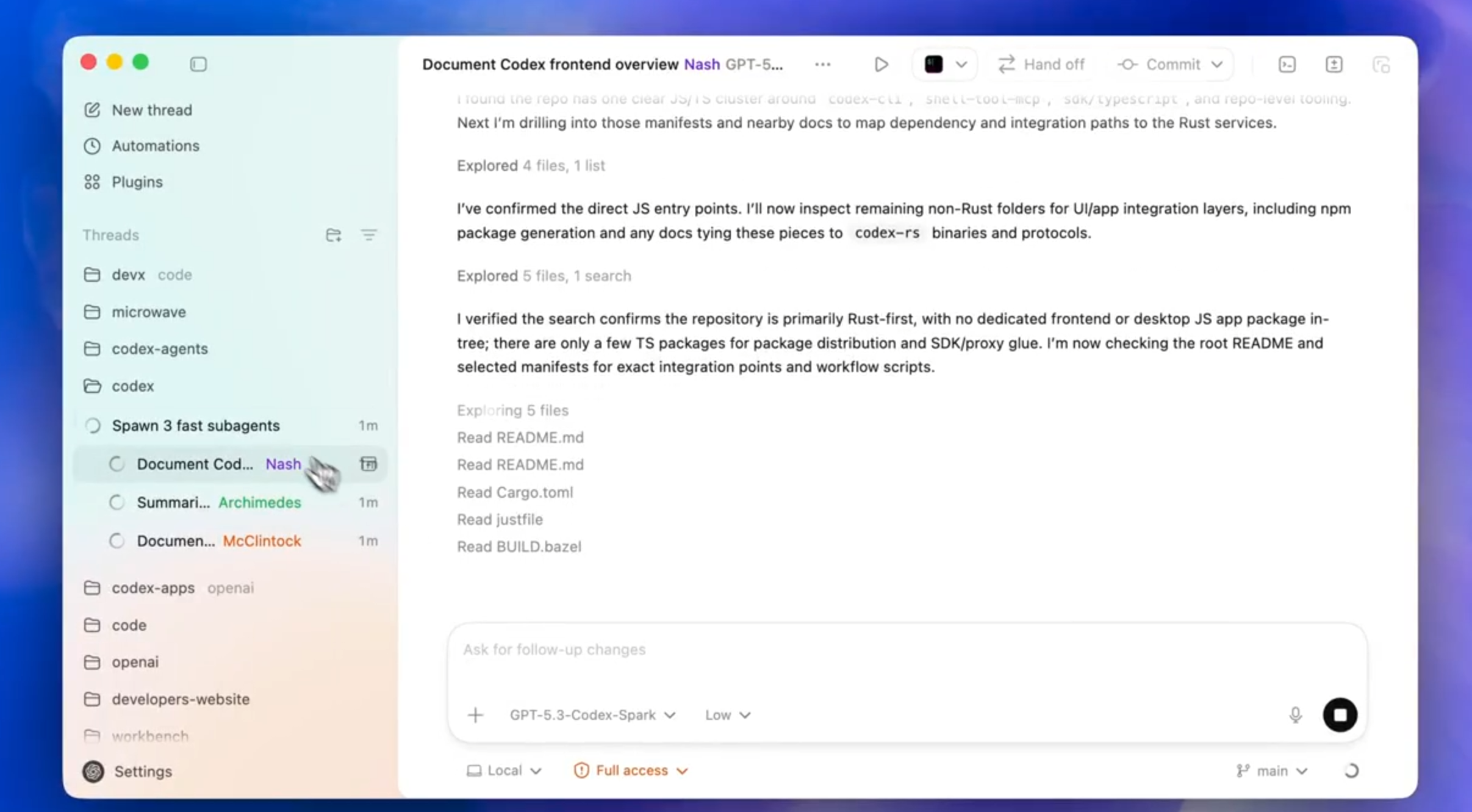

Developers can now deploy multiple AI assistants simultaneously. This update allows users to split complex coding projects across specialized agents without overwhelming the main context window.

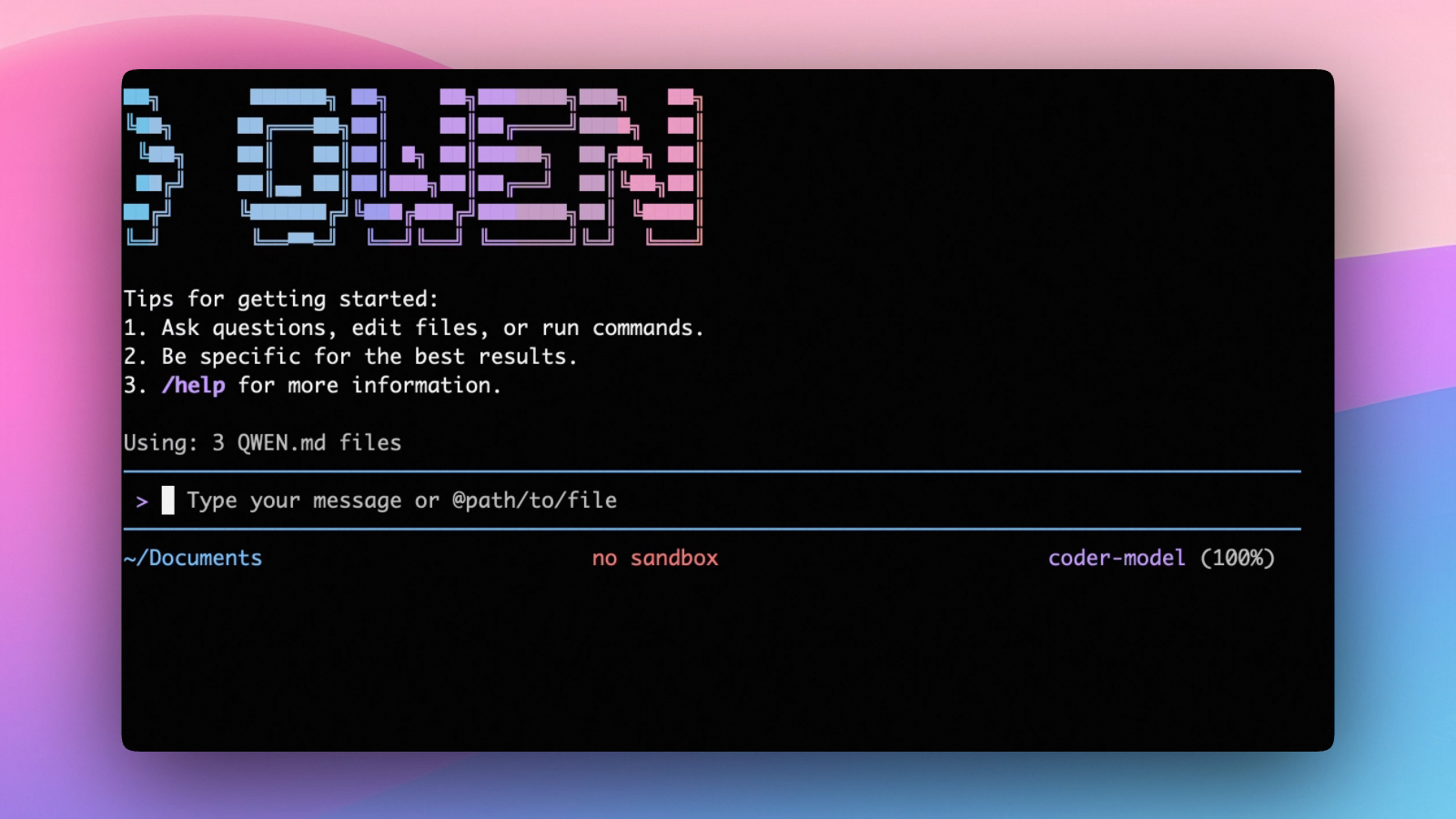

OpenAI has officially rolled out subagents for its Codex platform. The feature is live for all users in both the web application and the command-line interface.

Key Takeaways:

- The core utility: Developers can spawn dedicated agents for specific roles like frontend design or security review. These subagents execute their assigned tasks in parallel.

- The context advantage: Subagents operate inside isolated threads. Because each subagent's intermediate work stays in its own thread, the primary conversation's context does not fill up with those tokens and stays focused on the main task.

- The control mechanics: Users can jump into any active subagent thread. Developers can steer the AI in real time and approve intermediate outputs before moving forward.

- The customization: Developers define custom agent personalities and model choices using simple TOML configuration files. Users can assign heavy reasoning models to planning tasks and deploy faster models for basic execution.

- The availability: The feature requires no experimental flags and is generally available right now. Developers can review the official documentation directly at the OpenAI portal.

The Bottom Line: OpenAI is transforming Codex from a simple code completion tool into a comprehensive multi-agent development environment.

