MiniMax releases open weights for M2.7, but the license has a catch

MiniMax just released the open weights for M2.7, about a month after the model’s initial launch. But the model isn’t really open-source.

The weights are now available on Hugging Face and GitHub, roughly one month after the model’s initial launch in March.

As we covered when M2.7 launched, the model targets software engineering, agentic workflows, and office productivity tasks. It runs on a sparse Mixture-of-Experts architecture with 230B total parameters and around 10B active per token, with a 200k token context window.

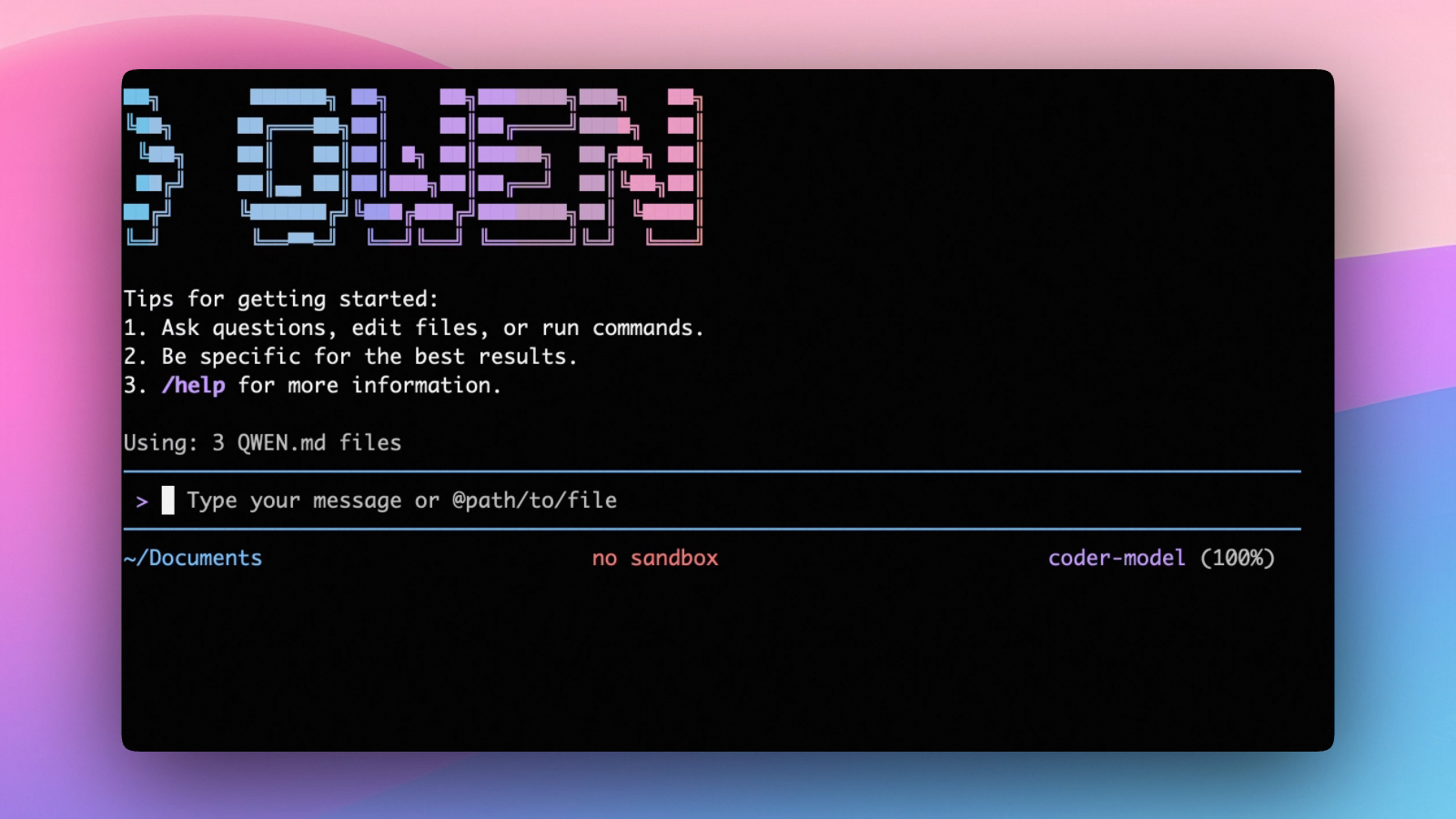

The weights are compatible with vLLM, SGLang, Transformers, Ollama, and llama.cpp. GGUF quantizations are available, ranging from around 60GB at 1-bit to 457GB at BF16.
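As a rough sanity check on those file sizes, a weights-only estimate is the parameter count times bits per weight, divided by eight. The sketch below assumes the 230B total-parameter figure and decimal gigabytes. The function names are illustrative, and real GGUF files also carry metadata and may keep some tensors at higher precision, so the results are approximate.

```python
TOTAL_PARAMS = 230e9  # total parameter count quoted for M2.7


def estimated_size_gb(params: float, bits_per_weight: float) -> float:
    """Weights-only size in decimal GB (ignores metadata and mixed precision)."""
    return params * bits_per_weight / 8 / 1e9


def effective_bits_per_weight(size_gb: float, params: float) -> float:
    """Average bits stored per parameter, given a file size in decimal GB."""
    return size_gb * 1e9 * 8 / params


if __name__ == "__main__":
    # BF16 stores 16 bits per weight.
    print(f"BF16 estimate: {estimated_size_gb(TOTAL_PARAMS, 16):.0f} GB")
    # Average bits per weight implied by the ~60GB "1-bit" file.
    print(f"60GB file: {effective_bits_per_weight(60, TOTAL_PARAMS):.2f} bits/weight")
```

Under these assumptions the BF16 estimate comes out at about 460GB, close to the quoted 457GB. The roughly 60GB file works out to about 2 bits per weight on average, so the "1-bit" label should probably be read as a nominal tier rather than a literal storage width.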

The main ways to run the model:

- Local deployment: Ollama and llama.cpp support, with GGUF quants covering a range of hardware configs

- Framework support: vLLM and SGLang for production inference setups

- API access: remains available via MiniMax’s platform at $0.30/M input and $1.20/M output
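For the hosted route, the per-token prices above translate into request costs with simple arithmetic. The following is a minimal sketch that assumes flat pricing at the quoted rates, with no caching discounts or tiers. The function name and the example token counts are hypothetical.

```python
INPUT_USD_PER_M = 0.30   # quoted price per million input tokens
OUTPUT_USD_PER_M = 1.20  # quoted price per million output tokens


def api_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost of one request at the flat per-million-token rates."""
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical request: a full 200k-token prompt with a 4k-token reply.
    print(f"${api_cost_usd(200_000, 4_000):.4f}")
```

At these rates, a request that fills the 200k context window and returns 4,000 tokens would cost roughly six and a half cents.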

The license is where things get complicated. M2.7 ships under a modified MIT license that restricts commercial use. Any deployment involving monetary compensation, paid services, or internal profit-oriented use requires prior written authorization from MiniMax. That’s a reversal from M2 and M2.5, both of which shipped under a standard MIT license with commercial use allowed.

MiniMax initially announced the release as open source. After community pushback, the company walked that back and clarified the correct term is open weights.

Threads on r/LocalLLaMA and Hacker News have consistently labeled the release “not open source,” with the commercial restriction drawing most of the criticism.

