MiniMax announces M2.7 AI model with self-reported benchmark gains

MiniMax just rolled out its M2.7 AI model.

The company heavily markets the system as a self-evolving entity, but the actual technical details reveal a highly capable model with strong internal benchmark scores.

MiniMax announced its new M2.7 AI model today in a detailed WeChat post. The announcement presents the system as capable of automating its own research and development. The technical description reads more like a highly optimized automated testing loop.

Key Takeaways:

- The self-learning claims: The marketing pushes the idea of recursive self-evolution. The actual system runs reinforcement learning experiments to autonomously tweak its own parameters. This framing acts as marketing flair for a genuinely advanced optimization loop.

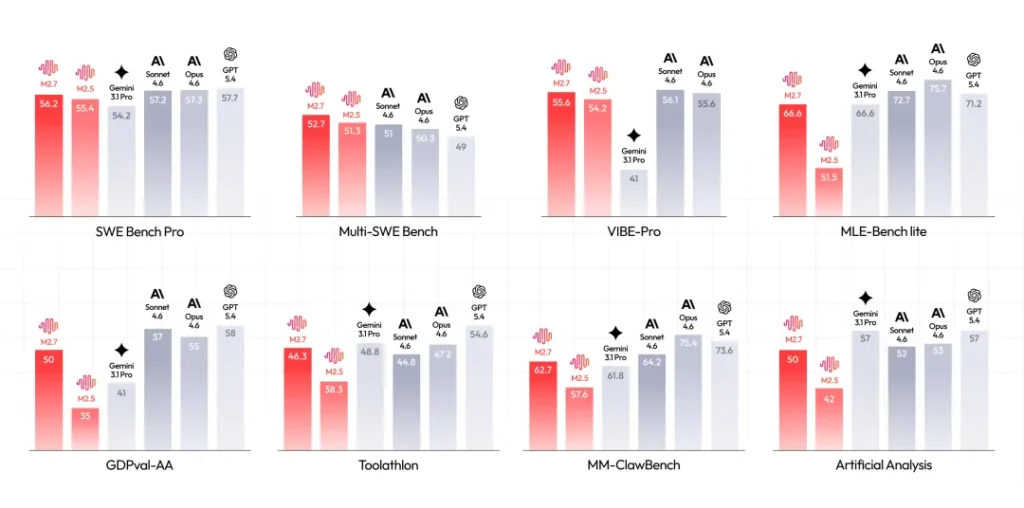

- The benchmark reality: The model scored 56.22 percent on the SWE-Pro evaluation. This number comes directly from the official WeChat announcement. It is a self-reported result from internal runs, not a verified public leaderboard score.

- The engineering performance: Previous models in this family scored in the 36 to 55 percent range on public leaderboards. Early social media users are already reporting strong performance and new software integrations, though these reports are anecdotal.

- The immediate availability: Developers can access the model right now through the official web agent and an Anthropic-compatible API. The ecosystem also includes a dedicated high-speed variant for latency-sensitive coding applications.

- The open-source expectation: The company has not yet released the open weights for M2.7. The community expects a public release on Hugging Face soon based on the history of previous versions.
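For readers curious what "Anthropic-compatible" means in practice, here is a minimal sketch of a request in the Anthropic Messages API shape (a POST to `/v1/messages` with `x-api-key` and `anthropic-version` headers). The base URL and the model identifier below are placeholders and assumptions, not values taken from the announcement; check MiniMax's own documentation for the real ones. The sketch only builds the request and does not send it.

```python
import json
import urllib.request

# Assumption: placeholder endpoint. The article does not give the real base URL.
BASE_URL = "https://api.example.invalid/anthropic"
# Assumption: hypothetical model identifier, not confirmed by the article.
MODEL_ID = "MiniMax-M2.7"


def build_messages_request(prompt: str, api_key: str, max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) a POST request in the Anthropic Messages API shape."""
    body = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "content-type": "application/json",
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_messages_request("Write a unit test for a FIFO queue.", api_key="YOUR_KEY")
    # Inspect the request instead of sending it, since the endpoint is a placeholder.
    print(req.get_method(), req.full_url)
    print(json.loads(req.data)["model"])
```

Once the real endpoint and model name are filled in, passing the request to `urllib.request.urlopen` would send it. An existing Anthropic client pointed at a different base URL should work in the same way, assuming the compatibility claim holds.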

The Bottom Line: MiniMax launched what appears to be a highly capable model with strong internal benchmark scores. Independent public evaluations have yet to verify these claims.

Check out the full announcement (in Chinese)
